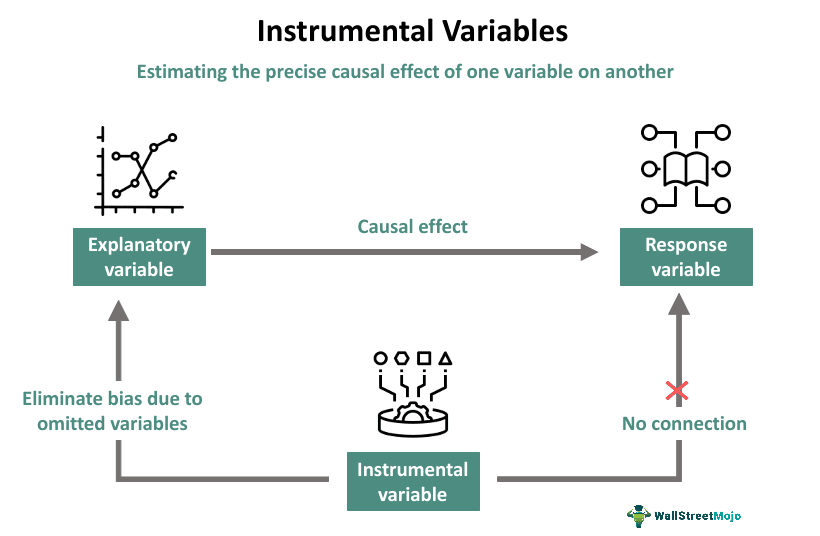

The concept of an instrumental variable (IV) is a powerful statistical tool, primarily used in econometrics and other fields concerned with causal inference, to address the pervasive problem of endogeneity. Endogeneity arises when the independent variable of interest is correlated with the error term in a regression model, leading to biased and inconsistent estimates of the causal effect. This correlation can stem from various sources, including omitted variables, simultaneity, or measurement error. Without a way to isolate the truly exogenous variation in the independent variable, establishing a genuine causal link between variables becomes a formidable challenge. Instrumental variables offer a sophisticated solution by leveraging a third variable that meets specific criteria to “instrument” the endogenous variable, thereby allowing for the estimation of its causal impact.

The Challenge of Endogeneity in Causal Inference

Before delving into the mechanics of instrumental variables, it’s crucial to understand the problem they aim to solve: endogeneity. In a typical regression model, we aim to estimate the relationship between an independent variable ($X$) and a dependent variable ($Y$), often represented as $Y = \beta_0 + \beta_1 X + \epsilon$, where $\beta_1$ is the causal effect we’re interested in. However, this model assumes that the error term, $\epsilon$, is uncorrelated with $X$. When this assumption is violated, meaning $Cov(X, \epsilon) \neq 0$, the ordinary least squares (OLS) estimator for $\beta_1$ becomes biased and inconsistent.

Sources of Endogeneity

Several common scenarios lead to endogeneity, hindering our ability to draw accurate causal conclusions:

Omitted Variable Bias

This is perhaps the most frequent cause of endogeneity. If a variable that influences both $X$ and $Y$ is not included in the regression model, its effect is absorbed into the error term. This omitted variable is then correlated with $X$, violating the core assumption. For instance, in studying the effect of education ($X$) on income ($Y$), a person’s inherent ability is likely correlated with both how much education they pursue and their future income. If ability isn’t controlled for, its influence will be embedded in the error term and spuriously attributed to education.
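The education example can be illustrated with a small simulation. This is a minimal sketch under made-up assumptions: the data-generating process, the coefficient values, and the helper name `ols` are all hypothetical and chosen only to make the bias visible.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 100_000

# Hypothetical data-generating process: ability drives both education and income.
ability = rng.normal(size=n)
education = 0.8 * ability + rng.normal(size=n)
true_beta1 = 1.0
income = 2.0 + true_beta1 * education + 1.5 * ability + rng.normal(size=n)

def ols(y, *regressors):
    """Return OLS coefficients (intercept first) via least squares."""
    design = np.column_stack([np.ones(len(y)), *regressors])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

beta_short = ols(income, education)[1]           # ability omitted
beta_long = ols(income, education, ability)[1]   # ability controlled for

print(f"true effect:         {true_beta1:.3f}")
print(f"omitting ability:    {beta_short:.3f}")
print(f"controlling ability: {beta_long:.3f}")
```

Under these assumed parameters the short regression should land near $1 + 1.5 \cdot 0.8 / 1.64 \approx 1.73$ rather than the true value of 1, because the omitted ability term is absorbed into the education coefficient.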

Simultaneity and Reverse Causality

In some relationships, the direction of causality is not clear-cut. $X$ might influence $Y$, but $Y$ might also influence $X$. This feedback loop creates a correlation between $X$ and the error term. Consider the effect of the number of police officers ($X$) on crime rates ($Y$). More police might deter crime, but areas with higher crime rates may also hire more police, so a simple regression of crime on police mixes the two effects.
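To see why a feedback loop produces endogeneity, consider a minimal two-equation sketch. The second equation and its symbols ($\gamma_0$, $\gamma_1$, $u$) are assumptions introduced here for illustration, with $u$ taken to be uncorrelated with $\epsilon$ and $\gamma_1 \beta_1 \neq 1$:

$$ Y = \beta_0 + \beta_1 X + \epsilon, \qquad X = \gamma_0 + \gamma_1 Y + u $$

Substituting the first equation into the second and solving for $X$ gives

$$ X = \frac{\gamma_0 + \gamma_1 \beta_0 + \gamma_1 \epsilon + u}{1 - \gamma_1 \beta_1} \quad \Rightarrow \quad Cov(X, \epsilon) = \frac{\gamma_1 \, Var(\epsilon)}{1 - \gamma_1 \beta_1} $$

which is nonzero whenever $\gamma_1 \neq 0$. In this sketch, any feedback from $Y$ to $X$ is enough to make $X$ endogenous.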

Measurement Error

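If the independent variable $X$ is measured with error, the observed value equals the true value plus noise. Under classical measurement error the noise is unrelated to the true value, but it is by construction part of the observed regressor and, once the observed value is substituted into the model, also part of the error term. The observed $X$ is therefore correlated with $\epsilon$, which typically biases the estimated coefficient toward zero (attenuation bias). For example, if we are trying to measure the effect of hours worked ($X$) on productivity ($Y$), and the reported hours are often inaccurate, this measurement error can bias the estimated effect of hours worked.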
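The hours-worked example can be sketched as a simulation of classical measurement error. All numbers and names here (`true_hours`, `reported_hours`, the noise variance, the helper `slope`) are hypothetical and serve only to illustrate the direction of the bias.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 100_000

# Hypothetical setup: true hours have variance 4, reporting noise also has variance 4.
true_hours = rng.normal(loc=40.0, scale=2.0, size=n)
reported_hours = true_hours + rng.normal(scale=2.0, size=n)
productivity = 5.0 + 0.5 * true_hours + rng.normal(size=n)

def slope(x, y):
    """OLS slope of y on x (regression with an intercept)."""
    return np.cov(x, y)[0, 1] / np.var(x, ddof=1)

slope_true = slope(true_hours, productivity)          # regressor measured without error
slope_reported = slope(reported_hours, productivity)  # regressor measured with error

print(f"slope using true hours:     {slope_true:.3f}")
print(f"slope using reported hours: {slope_reported:.3f}")
```

With equal signal and noise variances, the slope on reported hours should shrink to about half of the true 0.5, since the attenuation factor in this setup is $4 / (4 + 4) = 0.5$.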

The Logic and Requirements of an Instrumental Variable

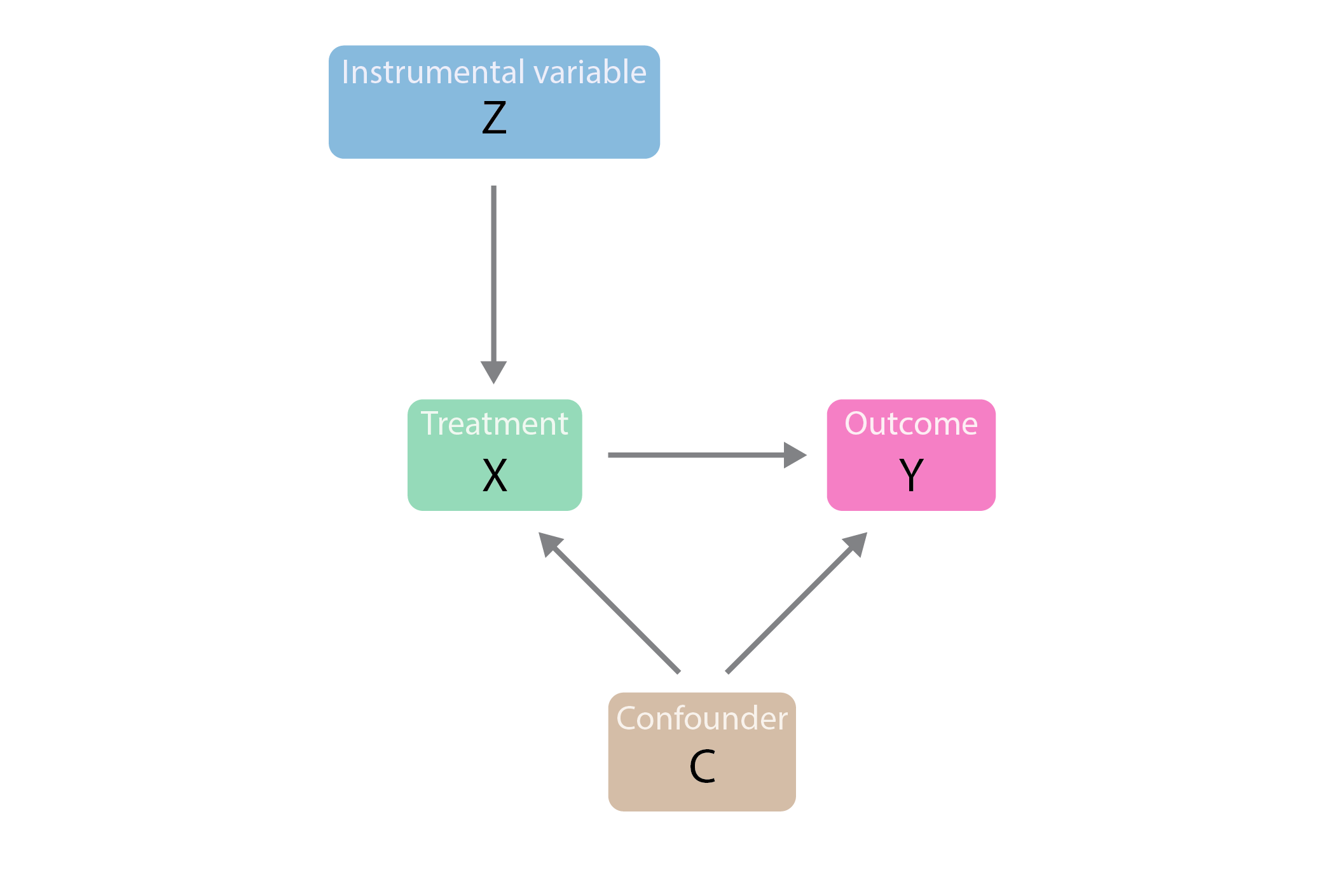

An instrumental variable, denoted as $Z$, is a variable that can help us overcome endogeneity. The core idea is that $Z$ affects $Y$ only through its effect on $X$, and $Z$ is uncorrelated with the error term $\epsilon$. This allows us to use the variation in $X$ that is induced by $Z$ to estimate the causal effect of $X$ on $Y$.

The Two Core Conditions for a Valid Instrument

For a variable $Z$ to be a valid instrumental variable for $X$, it must satisfy two fundamental conditions:

1. Relevance Condition (or First-Stage Condition)

The instrumental variable $Z$ must be correlated with the endogenous independent variable $X$. In statistical terms, this means $Cov(Z, X) \neq 0$. If $Z$ has no influence on $X$, then the variation in $X$ that $Z$ “explains” is zero, and thus $Z$ cannot help us isolate any part of $X$’s variation to study its effect on $Y$. This condition is often assessed by examining the strength of the relationship between $Z$ and $X$ in a preliminary regression (the “first stage” regression): $X = \pi_0 + \pi_1 Z + v$. A statistically significant and practically meaningful value for $\pi_1$ indicates that the relevance condition is met. Weak instruments (where $\pi_1$ is small) can lead to biased and imprecise estimates.

2. Exclusion Restriction (or Exogeneity Condition)

The instrumental variable $Z$ must be uncorrelated with the error term $\epsilon$ in the model for $Y$. That is, $Cov(Z, \epsilon) = 0$. This is the most critical and often the most difficult condition to satisfy and justify. It implies that $Z$ affects $Y$ only through its effect on $X$ and has no direct or indirect influence on $Y$ outside of its path through $X$. If $Z$ is correlated with $\epsilon$, then the variation in $X$ induced by $Z$ is also contaminated by factors that directly affect $Y$, rendering the instrument invalid.
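A short derivation shows how the two conditions together identify the causal effect in the simple model with a single instrument and no additional covariates. Taking the covariance of both sides of $Y = \beta_0 + \beta_1 X + \epsilon$ with $Z$ gives

$$ Cov(Z, Y) = \beta_1 \, Cov(Z, X) + Cov(Z, \epsilon) $$

The exclusion restriction sets the last term to zero, and the relevance condition allows division by $Cov(Z, X)$:

$$ \beta_1 = \frac{Cov(Z, Y)}{Cov(Z, X)} $$

Replacing the population covariances with sample covariances yields the simple IV estimator for this one-instrument case.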

Additional Considerations for Instruments

Beyond the two core conditions, several other aspects are important when selecting and using instrumental variables:

Exogeneity with Respect to Unobserved Confounders

The exclusion restriction essentially means that $Z$ must be exogenous with respect to any unobserved factors that affect $Y$ and are correlated with $X$. This is where the challenge often lies, as identifying a variable that influences $X$ but is truly independent of all other determinants of $Y$ can be difficult.

Plausibility of the Causal Path

The argument for the exclusion restriction relies on the plausibility of the causal pathway: $Z \rightarrow X \rightarrow Y$. Researchers must provide strong theoretical or empirical justification for why $Z$ would not have an independent effect on $Y$.

Methods for Estimating with Instrumental Variables

Once a valid instrumental variable is identified, several methods can be employed to estimate the causal effect. The most common and foundational method is Two-Stage Least Squares (2SLS).

Two-Stage Least Squares (2SLS)

2SLS is a two-step procedure that effectively addresses endogeneity. It’s widely used because it’s relatively straightforward to implement and interpret.

Stage 1: Predicting the Endogenous Variable

In the first stage, the endogenous independent variable $X$ is regressed on the instrumental variable(s) $Z$ and all exogenous variables in the model (including any variables that were originally in the main equation for $Y$ but are not endogenous). The predicted values of $X$ are obtained from this regression. These predicted values, denoted as $\hat{X}$, represent the variation in $X$ that is explained by the instrument(s).

$$ X = \pi_0 + \pi_1 Z + \text{exogenous variables} + v $$

$$ \hat{X} = \hat{\pi}_0 + \hat{\pi}_1 Z + \text{exogenous variables} $$

The key insight here is that $\hat{X}$ is, by construction, uncorrelated with the error term $\epsilon$ in the original model for $Y$, provided the instrument is valid. This is because the variation in $\hat{X}$ comes solely from $Z$ and the other exogenous variables, and $Z$ is assumed to be exogenous with respect to $\epsilon$.

Stage 2: Regressing the Dependent Variable on the Predicted Endogenous Variable

In the second stage, the original dependent variable $Y$ is regressed on the predicted values of $X$ ($\hat{X}$) obtained from the first stage, together with the same exogenous variables. The coefficients from this second-stage regression are the 2SLS estimates.

$$ Y = \beta_0 + \beta_1 \hat{X} + \text{exogenous variables} + \epsilon $$

The coefficient $\beta_1$ from this second-stage regression is the 2SLS estimate of the causal effect of $X$ on $Y$. It represents the effect of the variation in $X$ that is accounted for by the instrumental variable(s).
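The two stages can be sketched directly with least squares. This is a minimal illustration on simulated data, not a production implementation: the data-generating process, the variable names (`u` for an unobserved confounder, `w` for an exogenous control), and the helper `fit` are all assumptions made for the example.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 50_000

# Hypothetical data: u is an unobserved confounder, z the instrument, w an exogenous control.
u = rng.normal(size=n)
z = rng.normal(size=n)
w = rng.normal(size=n)
x = 0.5 + 1.0 * z + 0.5 * w + u + rng.normal(size=n)
y = 1.0 + 2.0 * x + 1.0 * w + 2.0 * u + rng.normal(size=n)   # true effect of x is 2.0

def fit(design, target):
    """Least-squares coefficients of target on the columns of design."""
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coef

const = np.ones(n)

# Stage 1: regress x on the instrument and the exogenous control, keep fitted values.
first_stage_design = np.column_stack([const, z, w])
pi_hat = fit(first_stage_design, x)
x_hat = first_stage_design @ pi_hat

# Stage 2: regress y on the fitted values and the same exogenous control.
beta_2sls = fit(np.column_stack([const, x_hat, w]), y)

# Naive OLS for comparison: ignores the confounder and is biased upward here.
beta_ols = fit(np.column_stack([const, x, w]), y)

print(f"OLS estimate of beta1:  {beta_ols[1]:.3f}")
print(f"2SLS estimate of beta1: {beta_2sls[1]:.3f}")
```

One caveat when running the stages by hand: the standard errors reported by an ordinary second-stage regression are not valid, because the residuals should be computed with the actual $X$ rather than $\hat{X}$. Dedicated IV routines make this correction.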

Other IV Estimation Techniques

While 2SLS is the most common, other related methods exist:

Limited Information Maximum Likelihood (LIML)

LIML is another estimator for instrumental variables. It aims to find the parameters that maximize the likelihood function given the model, subject to the identifying restrictions. LIML and 2SLS have the same asymptotic distribution under standard assumptions, but in cases of weak or many instruments LIML is sometimes preferred because it tends to be less biased in finite samples, though it can be more complex to implement and interpret.
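One way to see how LIML relates to 2SLS is through the family of k-class estimators. The matrix notation below is introduced here as an assumption for illustration: $\mathbf{y}$ is the vector of outcomes, $\mathbf{X}$ collects all regressors (endogenous and exogenous, including the constant), $\mathbf{Z}$ collects all instruments and exogenous regressors, and $\mathbf{M}_Z = \mathbf{I} - \mathbf{Z}(\mathbf{Z}'\mathbf{Z})^{-1}\mathbf{Z}'$.

$$ \hat{\beta}(k) = \left[ \mathbf{X}'(\mathbf{I} - k\,\mathbf{M}_Z)\mathbf{X} \right]^{-1} \mathbf{X}'(\mathbf{I} - k\,\mathbf{M}_Z)\,\mathbf{y} $$

Setting $k = 0$ gives OLS and $k = 1$ gives 2SLS. LIML corresponds to a data-dependent value $k \geq 1$, and it coincides with 2SLS when the model is exactly identified.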

Generalized Method of Moments (GMM)

GMM is a more general framework that includes 2SLS as a special case. It is particularly useful when there are more instruments than endogenous variables (overidentification), when errors are heteroskedastic, or when dealing with panel data. GMM provides a flexible way to combine information from multiple moment conditions, which are derived from the exogeneity assumptions of the instruments.
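The moment conditions can be written out for the simple model. As notation assumed here for illustration, let $\mathbf{Z}_i$ be the vector of instruments (including a constant) for observation $i$ and let $\epsilon_i(\beta) = Y_i - \beta_0 - \beta_1 X_i$. Instrument exogeneity implies

$$ E\left[ \mathbf{Z}_i \, \epsilon_i(\beta) \right] = 0 $$

and the GMM estimator chooses $\beta$ to bring the sample analogue of these moments as close to zero as possible under a weighting matrix $\mathbf{W}$:

$$ \hat{\beta}_{GMM} = \arg\min_{\beta} \; \bar{g}(\beta)' \, \mathbf{W} \, \bar{g}(\beta), \qquad \bar{g}(\beta) = \frac{1}{n} \sum_{i=1}^{n} \mathbf{Z}_i \, \epsilon_i(\beta) $$

Choosing $\mathbf{W} = \left( \frac{1}{n} \sum_i \mathbf{Z}_i \mathbf{Z}_i' \right)^{-1}$ reproduces the 2SLS estimator. Other choices of $\mathbf{W}$ can improve efficiency when errors are heteroskedastic.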

Applications and Challenges of Instrumental Variables

The utility of instrumental variables spans across numerous disciplines, but finding and validating appropriate instruments is rarely a trivial task.

Real-World Applications

Instrumental variables have been used to support causal claims in various fields:

Economics

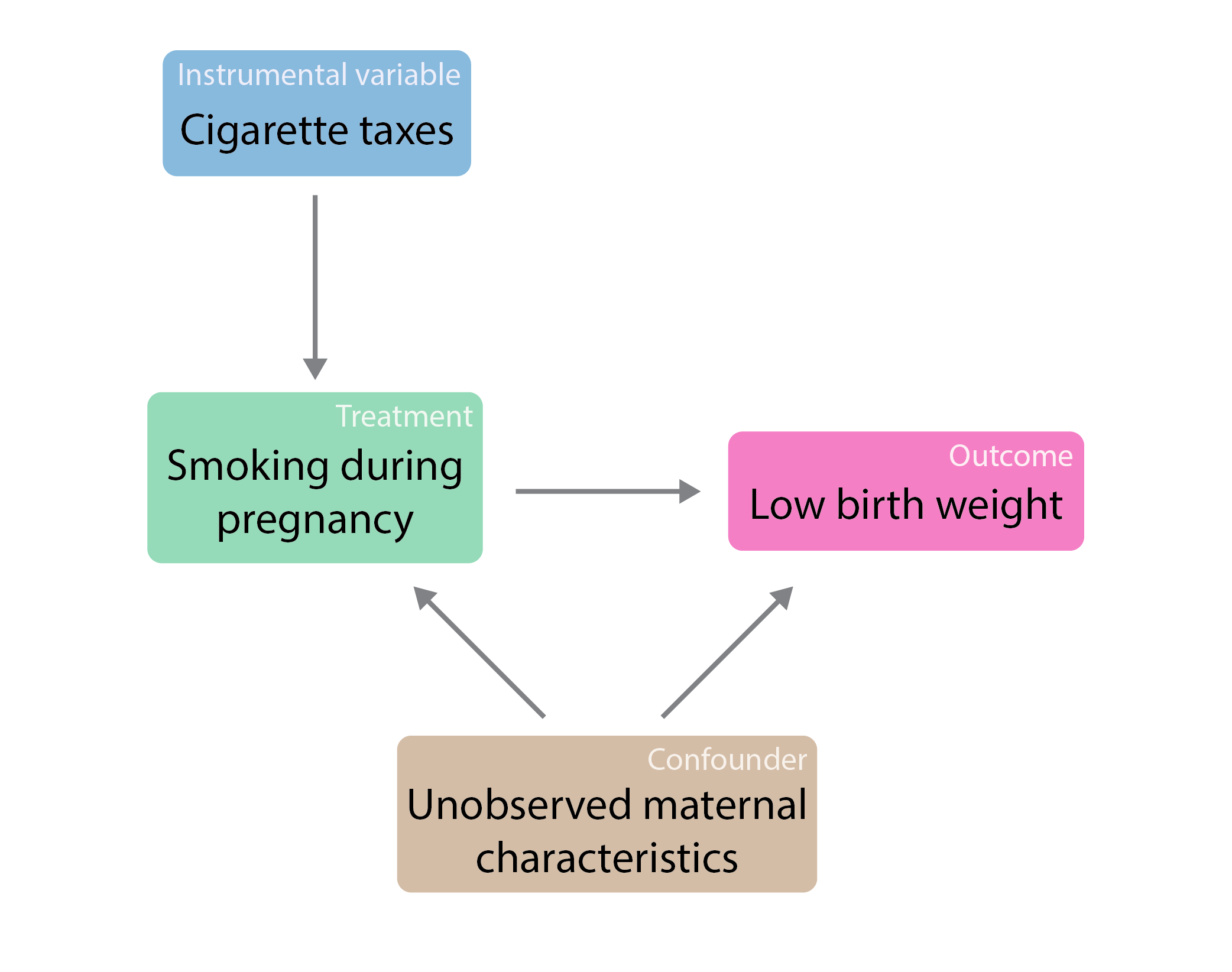

In economics, IVs are widely used to estimate the causal impact of education on earnings, the effect of schooling on health outcomes, the influence of trade policies on economic growth, and the effects of minimum wage laws on employment. For instance, a classic study by Angrist and Krueger (1991) used quarter of birth as an instrument for years of schooling to estimate the causal effect of education on wages, arguing that quarter of birth is unlikely to directly affect wages but might influence schooling decisions due to compulsory attendance laws.

Health Sciences

In public health, IVs can be used to study the effects of lifestyle choices or interventions on health outcomes, especially when randomized controlled trials are not feasible. For example, genetic predispositions or variations in healthcare access could be used as instruments to study the impact of certain health behaviors or treatments.

Political Science

Researchers have used IVs to analyze the impact of political institutions or reforms on various outcomes, such as economic development or social welfare. Geographic variations in political traditions or historical events could serve as potential instruments.

Challenges in Practice

Despite their power, instrumental variables are associated with significant challenges:

Finding Valid Instruments

The most significant hurdle is identifying an instrument that satisfies both the relevance and exclusion restriction conditions. This often requires deep domain knowledge, careful consideration of theoretical relationships, and robust empirical justification. The “plausibility” of an instrument is often debated.

Weak Instruments

If the instrument is only weakly correlated with the endogenous variable, the 2SLS estimates can be highly imprecise and even severely biased in finite samples, despite satisfying the asymptotic conditions. This phenomenon is known as the “weak instrument problem.” Statistical tests exist to assess instrument strength, such as the first-stage F-statistic.
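The first-stage F-statistic can be computed by comparing the first-stage regression with and without the instrument. The sketch below assumes a single instrument, no other covariates, and homoskedastic errors. The function name `first_stage_f` and the simulated data are hypothetical. A commonly cited rule of thumb treats a first-stage F below about 10 as a warning sign, though that threshold is a heuristic rather than a formal test.

```python
import numpy as np

def first_stage_f(x, z):
    """F-statistic for H0: pi1 = 0 in x = pi0 + pi1 * z + v (one instrument)."""
    n = len(x)
    design = np.column_stack([np.ones(n), z])
    coef, *_ = np.linalg.lstsq(design, x, rcond=None)
    resid = x - design @ coef
    rss_unrestricted = resid @ resid                 # model with the instrument
    rss_restricted = np.sum((x - x.mean()) ** 2)     # intercept-only model
    # One restriction in the numerator, n - 2 residual degrees of freedom.
    return (rss_restricted - rss_unrestricted) / (rss_unrestricted / (n - 2))

rng = np.random.default_rng(7)
n = 1_000
z = rng.normal(size=n)
v = rng.normal(size=n)

f_strong = first_stage_f(0.5 * z + v, z)    # instrument moves x substantially
f_weak = first_stage_f(0.02 * z + v, z)     # instrument barely moves x

print(f"first-stage F, strong instrument: {f_strong:.1f}")
print(f"first-stage F, weak instrument:   {f_weak:.1f}")
```

With several instruments or additional exogenous covariates, the same idea applies, but the restricted model keeps the covariates and the numerator degrees of freedom equal the number of excluded instruments.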

Overidentification Tests

When more instruments are available than the number of endogenous variables, the model is overidentified. This allows for tests of the overidentifying restrictions, which can help to assess the validity of the instruments. If the overidentification test rejects the null hypothesis of instrument validity, it suggests that at least one of the instruments is likely invalid.
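One widely used test of overidentifying restrictions is the Sargan test, which regresses the 2SLS residuals on all instruments and uses $n R^2$ as the test statistic. The sketch below assumes one endogenous regressor, two instruments, no other covariates, and homoskedastic errors. The function names and the simulated data are hypothetical, and the statistic is compared with the 5% chi-squared critical value for one degree of freedom (about 3.841) instead of computing a p-value.

```python
import numpy as np

def lstsq_fit(design, target):
    """Least-squares coefficients of target on the columns of design."""
    coef, *_ = np.linalg.lstsq(design, target, rcond=None)
    return coef

def sargan_statistic(y, x, instruments):
    """n * R^2 from regressing the 2SLS residuals on all instruments."""
    n = len(y)
    const = np.ones(n)
    Z = np.column_stack([const, instruments])
    x_hat = Z @ lstsq_fit(Z, x)                              # first stage
    beta = lstsq_fit(np.column_stack([const, x_hat]), y)     # second stage
    resid = y - beta[0] - beta[1] * x                        # uses actual x, not x_hat
    fitted = Z @ lstsq_fit(Z, resid)
    r_squared = 1.0 - np.sum((resid - fitted) ** 2) / np.sum((resid - resid.mean()) ** 2)
    return n * r_squared

rng = np.random.default_rng(3)
n = 5_000
u = rng.normal(size=n)                                       # unobserved confounder
instruments = rng.normal(size=(n, 2))
x = instruments[:, 0] + instruments[:, 1] + u + rng.normal(size=n)
y_valid = 1.0 + 2.0 * x + 2.0 * u + rng.normal(size=n)
y_invalid = y_valid + 1.0 * instruments[:, 1]                # second instrument affects y directly

CHI2_95_DF1 = 3.841   # 5% critical value, df = 2 instruments - 1 endogenous regressor
stat_valid = sargan_statistic(y_valid, x, instruments)
stat_invalid = sargan_statistic(y_invalid, x, instruments)

print(f"Sargan statistic, valid instruments:   {stat_valid:.2f}")
print(f"Sargan statistic, one invalid:         {stat_invalid:.2f}")
print(f"reject at 5% in the invalid case:      {stat_invalid > CHI2_95_DF1}")
```

A failure to reject does not prove validity: the test can only detect disagreement among instruments, so it has no power if all instruments are invalid in the same direction.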

Exogeneity of Other Variables

It is also important that any other exogenous variables included in the model are truly exogenous and uncorrelated with the error term. Violations of exogeneity for other covariates can still lead to biased estimates.

In conclusion, instrumental variables provide a critical methodology for navigating the complexities of causal inference in the presence of endogeneity. By isolating exogenous variation in an endogenous variable, IVs enable researchers to move beyond mere correlation and towards more robust claims about causal relationships, enriching our understanding across a wide spectrum of academic disciplines.