In the vast and intricate landscape of mathematics, understanding fundamental concepts is paramount to unlocking deeper insights and solving complex problems. Among these foundational ideas, the concept of a “minimum” holds a significant place. While seemingly straightforward, the mathematical definition and application of a minimum extend far beyond simple identification of the smallest number in a set. It forms the bedrock for optimization, analysis, and modeling across numerous scientific and engineering disciplines.

This exploration delves into the multifaceted nature of mathematical minima, from their basic definition to their critical roles in various fields, particularly as they relate to the cutting-edge technologies that are rapidly transforming our world.

The Fundamental Definition of a Minimum

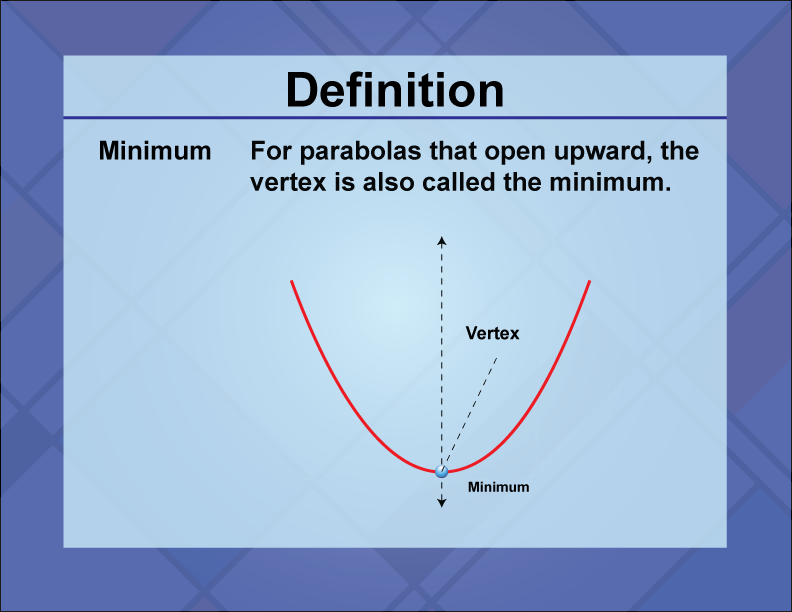

At its core, a minimum is the lowest value in a given set of numbers, or the lowest value that a function takes. This concept can be refined by distinguishing between different types of minima, each with its own nuances and applications.

Global Minimum: The Absolute Lowest Point

A global minimum, also known as an absolute minimum, is the lowest value that a function or a dataset can attain over its entire domain or for all possible inputs. Imagine a perfectly smooth, bowl-shaped curve. The very bottom of that bowl represents the global minimum of the function defining the curve.

For a set of discrete numbers, the global minimum is simply the smallest number in that collection. For example, in the set {5, 2, 8, 1, 9}, the global minimum is 1.
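As a quick illustration of the discrete case, the snippet below finds the smallest element of the example set and where it sits. The variable names are only illustrative.

```python
# The example set from the text, stored as a list so positions are defined.
values = [5, 2, 8, 1, 9]

# min() scans the whole collection, so it returns the global minimum.
smallest = min(values)

# index() reports the first position at which that minimum occurs.
position = values.index(smallest)

print(smallest, position)
```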

In the context of functions, finding a global minimum often involves examining the function’s behavior across its entire domain. This can be a complex process, especially for functions with many variables or intricate shapes. For a function $f(x)$, a value $m$ is the global minimum if $f(x) \ge m$ for all $x$ in the domain of $f$ and the value is actually attained, that is, $f(x^*) = m$ for some $x^*$ in the domain.

Local Minimum: A Valley Within the Landscape

While the global minimum is the ultimate lowest point, a function can have multiple “valleys” or dips. These are known as local minima. A local minimum is a point where the function’s value is no higher than at any nearby point within a specific neighborhood, but not necessarily the lowest value across the entire domain.

Consider a landscape with several hills and valleys. The lowest point in the entire landscape is the global minimum. However, each individual valley, even if it’s not the deepest one, represents a local minimum.

Mathematically, for a function $f(x)$, a point $c$ is a local minimum if there exists an open interval $I$ containing $c$ such that $f(x) \ge f(c)$ for all $x$ in $I$. This means that as you move away from $c$ in any direction within that interval, the function’s value either stays the same or increases.
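The valley picture can be sketched on sampled data. The example below assumes a made-up list of landscape heights and treats an interior sample as a local minimum when it is no higher than both of its neighbours, which is a discrete stand-in for the interval definition above. The function name `local_minima` and the data are hypothetical.

```python
def local_minima(samples):
    """Return indices of interior samples that are no higher than both neighbours."""
    return [
        i
        for i in range(1, len(samples) - 1)
        if samples[i] <= samples[i - 1] and samples[i] <= samples[i + 1]
    ]


# Hypothetical heights along a walk through hills and valleys.
heights = [4, 2, 3, 5, 1, 6, 3, 7]

# Every valley is a local minimum.
valleys = local_minima(heights)

# The deepest valley is the global minimum among them.
deepest = min(valleys, key=lambda i: heights[i])

print(valleys, deepest)
```

Endpoints are skipped here because they have only one neighbour; a fuller treatment would handle them separately.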

The Importance of Differentiation in Finding Minima

For differentiable functions, the derivative plays a crucial role in identifying potential local minima. The derivative of a function at a point gives the slope of the tangent line at that point.

At a local minimum (and also a local maximum) in the interior of the domain, the tangent line to a differentiable function is horizontal, meaning its slope is zero. Therefore, a necessary condition for an interior point $c$ to be a local extremum (either a minimum or a maximum) of a differentiable function $f(x)$ is that its first derivative, $f'(c)$, must be equal to zero. Such points are called critical points.

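However, $f'(c) = 0$ does not guarantee a minimum. The point could also be a maximum or an inflection point. For example, $f(x) = x^3$ has $f'(0) = 0$, yet $x = 0$ is neither a minimum nor a maximum. To distinguish between these cases, we often use the second derivative test.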

The Second Derivative Test: Confirming the Minimum

The second derivative of a function, denoted as $f''(x)$, represents the rate of change of the first derivative (the slope). Geometrically, the sign of the second derivative at a critical point can tell us about the curvature of the function:

- If $f''(c) > 0$: The function is concave up at $c$. This means the curve “holds water,” and the critical point $c$ corresponds to a local minimum.

- If $f''(c) < 0$: The function is concave down at $c$. This means the curve “spills water,” and the critical point $c$ corresponds to a local maximum.

- If $f''(c) = 0$: The second derivative test is inconclusive. The critical point could be a local minimum, a local maximum, or an inflection point. Further analysis, such as examining the behavior of the first derivative around $c$ or using higher-order derivatives, is required.
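The three cases above can be sketched in a few lines. The example functions are chosen here for illustration: $x^3 - 3x$ has critical points at $x = \pm 1$, while $x^3$ and $x^4$ both have a critical point at $x = 0$ where the test says nothing. The second derivatives are written out by hand rather than computed symbolically.

```python
def second_derivative_test(f_second, c):
    """Classify a critical point c from the sign of the second derivative there."""
    value = f_second(c)
    if value > 0:
        return "local minimum"
    if value < 0:
        return "local maximum"
    return "inconclusive"


# f(x) = x^3 - 3x, so f'(x) = 3x^2 - 3 vanishes at x = -1 and x = 1.
cubic_second = lambda x: 6 * x

# g(x) = x^3 and h(x) = x^4 both have a critical point at x = 0.
g_second = lambda x: 6 * x
h_second = lambda x: 12 * x ** 2

at_plus_one = second_derivative_test(cubic_second, 1.0)
at_minus_one = second_derivative_test(cubic_second, -1.0)
g_at_zero = second_derivative_test(g_second, 0.0)
h_at_zero = second_derivative_test(h_second, 0.0)

print(at_plus_one, at_minus_one, g_at_zero, h_at_zero)
```

Both $x^3$ and $x^4$ come back as inconclusive at zero, even though $x^4$ does have a minimum there and $x^3$ does not. That is exactly why further analysis is needed in the third case.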

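For functions of several variables, the same ideas apply: the gradient (the vector of partial derivatives) must vanish at a critical point, and a positive definite Hessian matrix of second partial derivatives at that point indicates a local minimum.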
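A minimal two-variable sketch follows, assuming the illustrative functions $f(x, y) = x^2 + 3y^2 - 2x$ and $g(x, y) = x^2 - y^2$, with gradients and Hessians worked out by hand. For a symmetric 2×2 matrix, positive definiteness can be checked with the top-left entry and the determinant (Sylvester's criterion).

```python
def gradient_f(x, y):
    """Gradient of f(x, y) = x^2 + 3y^2 - 2x, derived by hand."""
    return (2 * x - 2, 6 * y)


def is_positive_definite_2x2(h):
    """Sylvester's criterion for a symmetric 2x2 matrix [[a, b], [b, d]]."""
    (a, b), (c, d) = h
    return a > 0 and (a * d - b * c) > 0


# Hessian of f is constant: second partials are 2 and 6, mixed partials are 0.
bowl_hessian = [[2.0, 0.0], [0.0, 6.0]]

# Hessian of g(x, y) = x^2 - y^2, which has a saddle at the origin.
saddle_hessian = [[2.0, 0.0], [0.0, -2.0]]

critical_point = (1.0, 0.0)
gradient_at_point = gradient_f(*critical_point)
f_has_minimum = is_positive_definite_2x2(bowl_hessian)
g_has_minimum = is_positive_definite_2x2(saddle_hessian)

print(gradient_at_point, f_has_minimum, g_has_minimum)
```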

Minima in Practical Applications: Beyond the Abstract

The theoretical understanding of minima is vital, but their true power lies in their widespread application across diverse fields. From engineering to finance and, notably, in the advancement of technology, identifying and optimizing for minimum values is a recurring theme.

Optimization Problems: Finding the Best Outcome

Many real-world problems can be framed as optimization problems, where the goal is to find the best possible solution, often by minimizing a certain cost, error, or resource usage.

Example: Minimizing Cost in Manufacturing

A manufacturing company might want to minimize the cost of producing a certain number of units. This cost is often a function of various factors like raw material prices, labor, energy consumption, and production efficiency. By developing a mathematical model for the total cost, the company can then use calculus to find the combination of these factors that results in the lowest possible production cost. This involves finding the minimum of the cost function.
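To make this concrete, here is a toy model that is an assumption for illustration only, not a real costing method. Suppose the cost per unit for a batch of size $q$ is $c(q) = S/q + hq$, where $S$ is a fixed setup cost spread over the batch and $h$ is a per-unit cost that grows with batch size. Setting $c'(q) = -S/q^2 + h = 0$ gives $q = \sqrt{S/h}$, and $c''(q) = 2S/q^3 > 0$ confirms a minimum. The sketch compares that formula with a brute-force search.

```python
import math


def cost_per_unit(q, setup_cost=400.0, holding_cost=0.25):
    """Hypothetical cost model: setup cost shared by the batch plus a size-dependent cost."""
    return setup_cost / q + holding_cost * q


# Calculus answer: the derivative vanishes at sqrt(setup_cost / holding_cost).
analytic_q = math.sqrt(400.0 / 0.25)

# Brute-force check over whole-number batch sizes from 1 to 200.
best_q = min(range(1, 201), key=cost_per_unit)

print(analytic_q, best_q, cost_per_unit(best_q))
```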

Example: Minimizing Error in Data Fitting

In data analysis and machine learning, a common task is to fit a model to a set of observed data. This often involves minimizing the difference, or error, between the model’s predictions and the actual data. Techniques like “least squares regression” aim to find the parameters of a model that minimize the sum of the squared errors. The underlying mathematical operation is to find the minimum of an error function.
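For a straight-line model $y = ax + b$, the sum of squared errors has a closed-form minimizer. The sketch below uses the standard formulas for slope and intercept on a small invented dataset, then checks that nudging the slope away from the fitted value makes the error larger. Function names and data are illustrative.

```python
def fit_line(xs, ys):
    """Least squares slope and intercept for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def sum_squared_errors(xs, ys, slope, intercept):
    """The error function that least squares minimizes."""
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys))


# Invented observations that lie roughly along a line.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.1, 2.9, 5.2, 6.8, 9.0]

slope, intercept = fit_line(xs, ys)
best_error = sum_squared_errors(xs, ys, slope, intercept)
nudged_error = sum_squared_errors(xs, ys, slope + 0.1, intercept)

print(slope, intercept, best_error, nudged_error)
```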

Engineering Design: Ensuring Robustness and Efficiency

Engineers constantly strive to design systems that are both efficient and robust, often by minimizing specific undesirable outcomes.

Example: Minimizing Stress and Strain in Structures

When designing bridges, buildings, or aircraft components, engineers must ensure that the structures can withstand anticipated loads without failure. This involves analyzing stress and strain distributions within the material. Optimization techniques are used to minimize peak stresses or strains in critical areas, thereby enhancing the structural integrity and longevity of the design.

Example: Minimizing Energy Consumption

In the design of electrical devices, vehicles, and even urban infrastructure, minimizing energy consumption is a key objective. This translates to finding the operational parameters or design choices that result in the lowest energy usage for a given performance level. For instance, optimizing the aerodynamic profile of a car aims to minimize drag, which directly translates to reduced fuel consumption.

The Role of Minima in Technological Advancement

The concept of the minimum is not just a theoretical construct; it is an indispensable tool driving innovation and progress in cutting-edge technologies. From the sophisticated algorithms that power artificial intelligence to the precise control systems in advanced robotics, identifying and leveraging minima is fundamental.

Machine Learning and AI: The Heart of Optimization

Machine learning, the engine behind many AI applications, relies heavily on the concept of minimizing loss functions. When an AI model learns, it iteratively adjusts its internal parameters to reduce the “loss” or “error” between its predictions and the actual outcomes.

Supervised Learning: In tasks like image recognition or natural language processing, models are trained on vast datasets. A loss function quantifies how far the model’s output is from the correct label. The learning process is essentially an optimization problem where algorithms like gradient descent are used to find the set of model parameters that minimizes this loss function. The lower the loss, the better the model performs.
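A minimal sketch of gradient descent, assuming a one-parameter model $y = wx$ and a mean squared error loss. Real models have far more parameters, but the loop has the same shape: compute the gradient of the loss, then step against it. The data, learning rate, and step count are arbitrary choices for illustration.

```python
def mse(w, xs, ys):
    """Mean squared error of the model y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def mse_gradient(w, xs, ys):
    """Derivative of the mean squared error with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in zip(xs, ys)) / len(xs)


def gradient_descent(xs, ys, w=0.0, learning_rate=0.05, steps=200):
    """Repeatedly move w a small step in the direction that lowers the loss."""
    history = []
    for _ in range(steps):
        history.append(mse(w, xs, ys))
        w -= learning_rate * mse_gradient(w, xs, ys)
    return w, history


# Toy training data generated by y = 2x, so the best weight is 2.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

learned_w, loss_history = gradient_descent(xs, ys)
print(learned_w, loss_history[0], loss_history[-1])
```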

Unsupervised Learning: Even in unsupervised learning, where there are no explicit labels, algorithms often aim to minimize objectives such as reconstruction error (in autoencoders) or intra-cluster variance (in clustering algorithms), all of which are forms of finding minima.
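Intra-cluster variance can be illustrated with a one-dimensional version of Lloyd's k-means algorithm. This is a simplified sketch on invented points with hand-picked starting centers: it alternates between assigning each point to its nearest center and moving each center to the mean of its points.

```python
def kmeans_1d(points, centers, iterations=10):
    """Alternate nearest-center assignment and mean update for a fixed number of rounds."""
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for p in points:
            nearest = min(range(len(centers)), key=lambda k: (p - centers[k]) ** 2)
            clusters[nearest].append(p)
        # An empty cluster keeps its previous center.
        centers = [
            sum(c) / len(c) if c else centers[k]
            for k, c in enumerate(clusters)
        ]
    return centers, clusters


def within_cluster_ss(centers, clusters):
    """Sum of squared distances from each point to its cluster center."""
    return sum((p - m) ** 2 for m, c in zip(centers, clusters) for p in c)


# Two obvious groups of invented points, with hand-picked starting centers.
points = [1.0, 2.0, 3.0, 10.0, 11.0, 12.0]
centers, clusters = kmeans_1d(points, [1.0, 12.0])
spread = within_cluster_ss(centers, clusters)

print(centers, clusters, spread)
```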

Neural Networks: The training of deep neural networks, the backbone of modern AI, is a prime example. The network’s performance is measured by a loss function, and optimization algorithms work to find the minimum of this high-dimensional, complex function by adjusting millions of weights and biases. Because gradient-based methods can settle into a local minimum that is far from the best achievable loss, researchers have developed advanced optimization techniques that navigate these complex landscapes better and reach near-global minima.
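The local-minimum problem shows up even in one dimension. The sketch below assumes the illustrative non-convex function $f(x) = x^4 - 3x^2 + x$, which has two valleys of different depth. Plain gradient descent ends up in whichever valley is downhill from its starting point, so restarting from several points and keeping the best result is one simple workaround.

```python
def f(x):
    """Illustrative non-convex function with two valleys."""
    return x ** 4 - 3 * x ** 2 + x


def f_prime(x):
    """Derivative of f, worked out by hand."""
    return 4 * x ** 3 - 6 * x + 1


def descend(x, learning_rate=0.01, steps=2000):
    """Plain gradient descent from a given starting point."""
    for _ in range(steps):
        x -= learning_rate * f_prime(x)
    return x


# Start once on each side of the landscape.
starts = [-2.0, 2.0]
ends = [descend(s) for s in starts]

# Keep whichever end point has the lower function value.
best = min(ends, key=f)

print(ends, best, f(best))
```

The run started at 2.0 settles in the shallower valley on the right, while the run started at -2.0 finds the deeper one on the left.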

Robotics and Autonomous Systems: Precision and Efficiency

The development of intelligent robots and autonomous systems, such as self-driving cars and advanced drones, fundamentally depends on minimizing errors and optimizing performance.

Navigation and Path Planning: For a robot or a drone to navigate from point A to point B efficiently and safely, it must plan an optimal path. This often involves minimizing factors like travel time, energy expenditure, or the risk of collision. Algorithms like A* search or Dijkstra’s algorithm are used to find the shortest or most cost-effective path on a graph, which is a direct application of finding a minimum cost.
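As a sketch of minimum-cost path finding, here is a compact version of Dijkstra's algorithm built on a priority queue from Python's standard `heapq` module. The graph, node names, and edge costs are invented. Edge costs must be non-negative for the algorithm to be correct.

```python
import heapq


def dijkstra(graph, source):
    """Return the minimum total cost from source to every reachable node."""
    dist = {source: 0}
    queue = [(0, source)]
    while queue:
        d, node = heapq.heappop(queue)
        # Skip queue entries made obsolete by a cheaper route found later.
        if d > dist.get(node, float("inf")):
            continue
        for neighbour, weight in graph.get(node, {}).items():
            candidate = d + weight
            if candidate < dist.get(neighbour, float("inf")):
                dist[neighbour] = candidate
                heapq.heappush(queue, (candidate, neighbour))
    return dist


# Hypothetical waypoints with directed edge costs (for example, travel time).
graph = {
    "A": {"B": 1, "C": 4},
    "B": {"C": 2, "D": 5},
    "C": {"D": 1},
    "D": {},
}

costs = dijkstra(graph, "A")
print(costs)
```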

Control Systems: The sophisticated control systems that keep drones stable, enable autonomous vehicles to maintain their lane, or guide robotic arms with precision rely on minimizing deviations from desired states. For example, a drone’s flight controller aims to minimize the error between its intended altitude and current altitude, or its intended orientation and current orientation. This is achieved by continuously calculating corrective actions based on sensor feedback and minimizing errors within a feedback loop.
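The feedback idea can be sketched with a proportional controller, the simplest member of this family. The model below is deliberately crude and is not how a real flight controller works: it assumes the correction directly changes altitude each step, with no mass, gravity, or sensor noise. The gain and step count are arbitrary.

```python
def hold_altitude(target, altitude, gain=0.3, steps=50):
    """Each step, apply a correction proportional to the current error."""
    errors = []
    for _ in range(steps):
        error = target - altitude
        errors.append(abs(error))
        # Toy dynamics: the correction is applied directly to the altitude.
        altitude += gain * error
    return altitude, errors


final_altitude, errors = hold_altitude(target=10.0, altitude=0.0)
print(final_altitude, errors[0], errors[-1])
```

With a gain of 0.3, the error shrinks by a factor of 0.7 on every pass through the loop, so the altitude approaches the target steadily.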

Sensor Fusion and Data Interpretation: When robots and autonomous systems gather data from multiple sensors (e.g., cameras, LiDAR, GPS), this data needs to be fused and interpreted. Techniques for sensor fusion often aim to minimize the uncertainty or discrepancy between readings from different sensors, leading to a more accurate and robust understanding of the environment.
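One classic instance of this is inverse-variance weighting. Assuming the sensors give independent, unbiased readings with known variances, weighting each reading by the inverse of its variance yields the linear combination with the smallest variance. The readings below are invented.

```python
def fuse(readings):
    """Combine (value, variance) pairs using inverse-variance weights."""
    weights = [1.0 / variance for _, variance in readings]
    total = sum(weights)
    estimate = sum(w * value for w, (value, _) in zip(weights, readings)) / total
    # The variance of the fused estimate is the reciprocal of the summed weights.
    fused_variance = 1.0 / total
    return estimate, fused_variance


# Hypothetical distance readings: a noisy sensor and a more precise one.
readings = [(10.0, 4.0), (12.0, 1.0)]

estimate, fused_variance = fuse(readings)
print(estimate, fused_variance)
```

The fused estimate sits closer to the more precise reading, and its variance is lower than that of either sensor alone.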

Imaging and Signal Processing: Clarity and Reconstruction

In image and signal processing, the concept of minimum is vital for tasks ranging from noise reduction to signal reconstruction.

Image Denoising: Real-world images often suffer from noise, which degrades their quality. Image denoising algorithms aim to remove this noise while preserving important image features. Many denoising techniques involve minimizing a “cost function” that penalizes both the presence of noise and excessive smoothing of image edges.
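A one-dimensional sketch of the cost-function idea follows. It assumes a simple quadratic cost: a fidelity term that keeps the result close to the noisy signal, plus a roughness term that penalizes differences between neighbouring samples. This particular penalty also blurs sharp edges somewhat; edge-preserving methods such as total variation denoising use a different penalty. The signal, weight `lam`, and step size are illustrative.

```python
def cost(x, y, lam):
    """Fidelity to the noisy signal y plus lam times the roughness of x."""
    fidelity = sum((xi - yi) ** 2 for xi, yi in zip(x, y))
    roughness = sum((x[i + 1] - x[i]) ** 2 for i in range(len(x) - 1))
    return fidelity + lam * roughness


def denoise(y, lam=2.0, step=0.05, iterations=500):
    """Minimize the cost by gradient descent (step is small enough for lam=2.0)."""
    x = list(y)
    n = len(x)
    for _ in range(iterations):
        grad = []
        for i in range(n):
            g = 2 * (x[i] - y[i])
            if i > 0:
                g += 2 * lam * (x[i] - x[i - 1])
            if i < n - 1:
                g -= 2 * lam * (x[i + 1] - x[i])
            grad.append(g)
        x = [xi - step * gi for xi, gi in zip(x, grad)]
    return x


# Invented noisy samples of a signal that steps from about 0 to about 1.
noisy = [0.0, 0.2, -0.1, 0.1, 0.0, 1.1, 0.9, 1.0, 1.2, 1.0]
smooth = denoise(noisy)

print(cost(noisy, noisy, 2.0), cost(smooth, noisy, 2.0))
```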

Signal Reconstruction: In fields like medical imaging (e.g., MRI), signals are often acquired indirectly and incompletely. Reconstructing a clear image from these incomplete measurements requires sophisticated algorithms that often employ minimization principles. For instance, compressed sensing techniques reconstruct signals by finding the sparsest possible solution that is consistent with the acquired measurements, typically by minimizing a sparsity-inducing norm such as the $\ell_1$ norm.
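A full compressed sensing solver is beyond a short example, but one of its common building blocks fits in a few lines. Soft thresholding is the exact minimizer of the scalar problem $\tfrac{1}{2}(x - v)^2 + \lambda |x|$, and iterative methods for $\ell_1$-regularized problems (ISTA, for example) apply it at every step. The sketch below shows how it sets small coefficients to exactly zero, which is where sparsity comes from. The coefficients and threshold are invented.

```python
def soft_threshold(v, lam):
    """Minimizer of 0.5 * (x - v)^2 + lam * |x| over x."""
    if v > lam:
        return v - lam
    if v < -lam:
        return v + lam
    return 0.0


def objective(x, v, lam):
    """The scalar cost that soft thresholding minimizes."""
    return 0.5 * (x - v) ** 2 + lam * abs(x)


# Hypothetical coefficients: two large entries and two small ones.
coefficients = [3.0, -0.4, 0.2, -2.5]
shrunk = [soft_threshold(v, 0.5) for v in coefficients]

print(shrunk)
```

The two small coefficients become exactly zero, while the large ones are only shrunk toward zero by the threshold.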

In essence, the pursuit of minimizing errors, costs, risks, and inefficiencies is a driving force behind the relentless pace of technological advancement. The mathematical concept of the minimum, therefore, is not merely an academic exercise but a practical and powerful tool that underpins much of the innovation we witness today.