In the realm of digital imaging and aerial photography, “light” is often discussed in terms of aesthetics—composition, shadows, and golden hour hues. However, at its most fundamental level, light is not merely a visual medium but a form of electromagnetic radiation carrying specific packets of energy. Understanding what the energy of light is, and how it interacts with sophisticated camera hardware, is the cornerstone of mastering modern imaging technology. Whether you are operating a high-end cinema drone or a thermal mapping UAV, the transition from raw photons to a digital masterpiece is a complex journey of energy conversion.

The Physics of Light Energy and the Photoelectric Effect

To understand imaging, one must first understand the dual nature of light. Light behaves both as a wave and as a particle. In the context of digital sensors, we primarily concern ourselves with the particle aspect, where light is composed of “photons.” Each photon carries a discrete amount of energy, which is directly proportional to its electromagnetic frequency.

Photons as Discrete Packets of Energy

The modern concept of the photon dates to Albert Einstein’s 1905 explanation of the photoelectric effect. He posited that light energy is not a continuous stream but is quantized. This means that when we point a camera at a subject, we are essentially bombarding the sensor with billions of tiny “energy packets.” The energy ($E$) of a single photon is defined by the equation $E = hf$, where $h$ is Planck’s constant and $f$ is the frequency. For imaging professionals, this means two things: the energy of each photon determines whether the sensor can detect it at all, while the “strength” of the signal a camera records depends mainly on how many photons arrive during the exposure.

How Wavelength Influences Energy Levels

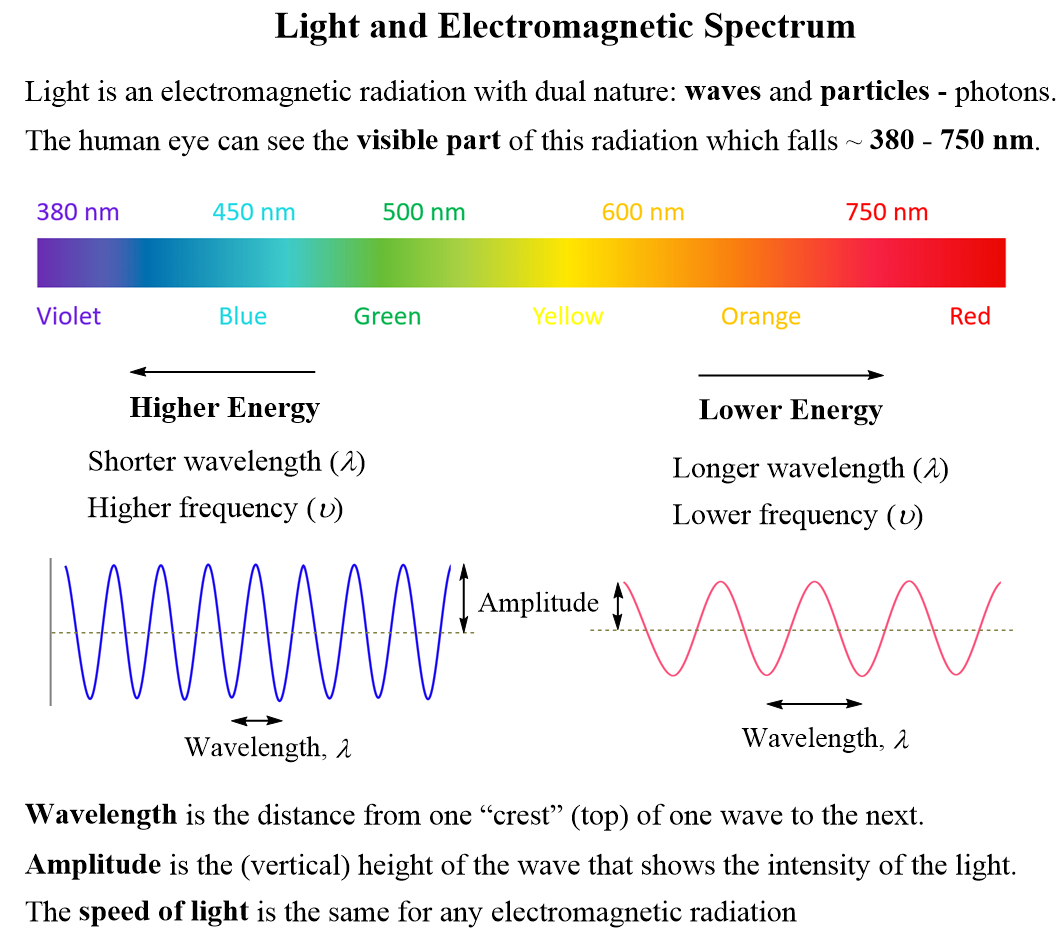

The visible spectrum consists of different colors, each corresponding to a specific wavelength and, consequently, a specific photon energy. Because $f = c/\lambda$ (with $c$ the speed of light and $\lambda$ the wavelength), the photon energy can also be written as $E = hc/\lambda$. Blue and violet light have shorter wavelengths and higher frequencies, meaning they carry more energy per photon than red light, which has longer wavelengths and lower frequencies. In high-precision imaging, this energy variance matters. In silicon, an absorbed visible photon typically frees a single electron whatever its color, but photon energy affects how deep in the silicon the photon is absorbed, so the sensor’s sensitivity varies with wavelength and must be calibrated for. This fundamental physics influences everything from color science to the design of optical coatings on professional-grade lenses.
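To put numbers on the difference between colors, the relation $E = hf$ can be evaluated directly. The following is a minimal sketch: the function name and the three representative wavelengths are illustrative choices, not values from any camera specification.

```python
# Physical constants (exact SI values since the 2019 redefinition).
PLANCK_H = 6.62607015e-34        # Planck's constant, J*s
LIGHT_C = 299_792_458.0          # speed of light in vacuum, m/s
EV_IN_JOULES = 1.602176634e-19   # one electronvolt in joules


def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of one photon in electronvolts, from E = h*f with f = c/wavelength."""
    wavelength_m = wavelength_nm * 1e-9
    frequency_hz = LIGHT_C / wavelength_m
    return PLANCK_H * frequency_hz / EV_IN_JOULES


# Representative wavelengths; the exact values are illustrative assumptions.
for name, nm in [("blue", 450.0), ("green", 550.0), ("red", 650.0)]:
    print(f"{name:>5} {nm:.0f} nm -> {photon_energy_ev(nm):.2f} eV")
```

With these inputs a blue photon comes out at roughly 2.8 eV and a red photon at roughly 1.9 eV, so the blue photon carries about 45% more energy.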

Translating Light Energy into Digital Data

The “energy of light” is useless for digital storage until it is converted into an electrical signal. This process happens at the image sensor, typically a CMOS (Complementary Metal-Oxide-Semiconductor) or CCD (Charge-Coupled Device) chip. This is where the physics of energy meets the engineering of silicon.

The Role of the Image Sensor: From Photons to Electrons

When a photon strikes the silicon of a sensor pixel (a photodiode), its energy is absorbed. If the photon has enough energy to exceed the “bandgap” of silicon (about 1.1 eV), it frees an electron inside the material. This is the internal photoelectric effect in action. The number of electrons freed is proportional to the number of photons absorbed by the pixel. These electrons are collected in a “well” and converted into a voltage, which is then digitized into the bits and bytes that form an image. In essence, a digital camera is a device that counts photons and translates the count into a numerical value.
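The chain from photons to a stored number can be sketched as a toy model. Everything below is idealized and noise-free. The quantum efficiency, well capacity, and bit depth are hypothetical defaults chosen for illustration, and real sensors add noise, gain stages, and non-linearities that this sketch ignores.

```python
SILICON_BANDGAP_EV = 1.12   # approximate room-temperature bandgap of silicon
HC_EV_NM = 1239.84          # h*c expressed in eV*nm


def can_free_electron(wavelength_nm: float) -> bool:
    """True if a single photon carries at least the silicon bandgap energy."""
    return HC_EV_NM / wavelength_nm >= SILICON_BANDGAP_EV


def pixel_to_digital_number(
    photons: int,
    quantum_efficiency: float = 0.6,    # assumed fraction of photons converted
    full_well_electrons: int = 30_000,  # assumed well capacity
    bit_depth: int = 12,                # assumed ADC resolution
) -> int:
    """Idealized conversion from photon count to ADC output code."""
    # Electrons collected, clipped once the well is full.
    electrons = min(int(photons * quantum_efficiency), full_well_electrons)
    max_code = 2**bit_depth - 1
    return round(electrons / full_well_electrons * max_code)


print(can_free_electron(550.0))    # visible green light
print(can_free_electron(1200.0))   # infrared beyond the silicon cutoff
print(pixel_to_digital_number(100_000))  # enough photons to fill the well
```

The bandgap check explains why ordinary silicon sensors stop responding at around 1100 nm: photons with longer wavelengths carry too little energy to free an electron.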

Full Well Capacity and Dynamic Range

A critical concept in imaging performance is “Full Well Capacity.” Think of each pixel as a bucket designed to catch the energy of light. Once the bucket is full of electrons, it can no longer record additional energy, leading to “blown-out” highlights or “clipping.” High-end imaging systems, such as those found on professional cinema drones, are designed with larger pixels or deeper wells to handle more energy. This allows for a higher “Dynamic Range,” the ability to simultaneously record the high energy of a bright sky and the low energy of a deep shadow without losing detail in either. Dynamic range is ultimately set by the ratio between the full well capacity and the sensor’s noise floor.
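A common way to quantify this is the ratio of full well capacity to the sensor's read noise, expressed in stops or decibels. The sketch below assumes that definition. The read noise figure and both well capacities are hypothetical numbers for illustration, not measurements of any real camera.

```python
import math


def dynamic_range_stops(full_well_electrons: float, read_noise_electrons: float) -> float:
    """Dynamic range in photographic stops (each stop is a doubling)."""
    return math.log2(full_well_electrons / read_noise_electrons)


def dynamic_range_db(full_well_electrons: float, read_noise_electrons: float) -> float:
    """The same ratio expressed in decibels."""
    return 20.0 * math.log10(full_well_electrons / read_noise_electrons)


# Hypothetical pixels: a small one and a larger one with the same read noise.
for label, full_well in [("small pixel", 10_000), ("large pixel", 40_000)]:
    stops = dynamic_range_stops(full_well, read_noise_electrons=3.0)
    print(f"{label}: {stops:.1f} stops")
```

Under these assumptions, quadrupling the well capacity buys exactly two extra stops, which is why larger pixels tend to hold highlight detail better.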

Managing Energy Intensity through Exposure Control

Understanding light as energy changes the way we view the “Exposure Triangle” (Aperture, Shutter Speed, and ISO). Rather than just artistic settings, these are mechanical and electronic gates that control the flow and accumulation of light energy.

Aperture and the Flux of Photons

The aperture of a lens functions as a physical throttle. By widening the iris (choosing a lower f-number), you increase the “flux,” the amount of light energy reaching the sensor per unit of time. In low-light environments, where the energy of light is sparse, a wide aperture is essential to ensure enough photons hit the sensor to create a usable signal. Conversely, in the intense light of the midday sun, the aperture must be narrowed to prevent the sensor from being overwhelmed by an overabundance of energy.
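The throttle effect follows a simple rule: for a given scene, the light reaching the sensor scales with the inverse square of the f-number. A minimal sketch of that relationship, with illustrative function names:

```python
import math


def relative_light(f_number_from: float, f_number_to: float) -> float:
    """Factor by which light on the sensor changes when the f-number changes."""
    return (f_number_from / f_number_to) ** 2


def stops_between(f_number_from: float, f_number_to: float) -> float:
    """Exposure change in stops; a negative result means less light."""
    return math.log2(relative_light(f_number_from, f_number_to))


print(relative_light(5.6, 2.8))  # opening up from f/5.6 to f/2.8
print(stops_between(2.8, 5.6))   # stopping down from f/2.8 to f/5.6
```

Opening up from f/5.6 to f/2.8 admits four times as much light, which is two full stops.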

Shutter Speed and Temporal Energy Collection

Shutter speed determines the duration for which the sensor is exposed to light energy. This is a temporal measurement of energy accumulation. A long shutter speed allows the sensor to “integrate” energy over time, which is why long-exposure shots can make a dark night look like day. However, in aerial imaging, shutter speed is also a tool for managing motion. Because drones are often in motion, the challenge is to collect enough energy to create a clear image without the sensor moving so much that the energy from a single point in space is smeared across multiple pixels, resulting in motion blur.
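The smear described above can be estimated with simple arithmetic. This sketch assumes a downward-looking camera and introduces ground sample distance (GSD, the ground width covered by one pixel), a term not used in the text above. The flight speed and GSD values are hypothetical.

```python
def motion_blur_pixels(ground_speed_m_s: float, exposure_s: float, gsd_m_per_pixel: float) -> float:
    """How many pixels a ground point travels across during one exposure."""
    distance_m = ground_speed_m_s * exposure_s
    return distance_m / gsd_m_per_pixel


def longest_exposure_s(ground_speed_m_s: float, gsd_m_per_pixel: float, max_blur_pixels: float = 1.0) -> float:
    """Longest exposure that keeps blur within the given pixel budget."""
    return max_blur_pixels * gsd_m_per_pixel / ground_speed_m_s


# Hypothetical mapping flight: 10 m/s over the ground, 2 cm per pixel.
print(motion_blur_pixels(10.0, 1 / 1000, 0.02))
print(longest_exposure_s(10.0, 0.02))
```

With these numbers a 1/1000 s exposure smears a point across about half a pixel, and anything longer than about 1/500 s exceeds a one-pixel blur budget.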

Spectral Energy and Color Accuracy

Light energy is not uniform; it is distributed across a spectrum. To produce the vivid, lifelike colors we expect from 4K and 8K cameras, the imaging system must accurately categorize the energy it receives.

The Bayer Filter and Chromatic Energy Distribution

Since silicon sensors are naturally color-blind—they only measure the quantity of energy, not the color—imaging systems use a Bayer Filter Mosaic. This is a grid of red, green, and blue filters placed over the pixels. Each filter only allows photons within a specific energy range to pass through. For example, the “red” filter blocks high-energy blue and green photons, allowing only the lower-energy red photons to reach the pixel below. The camera’s processor then uses complex algorithms (demosaicing) to reconstruct the full-color image based on the energy levels recorded behind each filter.
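The sampling step can be illustrated with a tiny example. The sketch below assumes an RGGB tile order, which is common but varies between sensors, and it only shows what the sensor records. The demosaicing step that rebuilds full color is omitted.

```python
# RGGB tile: which colour channel each pixel records, keyed by (row % 2, col % 2).
RGGB = {(0, 0): 0, (0, 1): 1, (1, 0): 1, (1, 1): 2}  # 0 = R, 1 = G, 2 = B


def bayer_mosaic(rgb_image):
    """Keep one channel per pixel, as a sensor behind an RGGB filter would."""
    return [
        [pixel[RGGB[(r % 2, c % 2)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


# A 2x2 scene where every pixel is the same orange: (R, G, B) = (200, 120, 40).
scene = [[(200, 120, 40)] * 2 for _ in range(2)]
raw = bayer_mosaic(scene)
print(raw)  # [[200, 120], [120, 40]]
```

Even though the scene is uniformly orange, each raw pixel holds a different number, which is why the processor needs neighboring pixels to recover the true color at any one location.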

Beyond Visible Light: Thermal and Infrared Imaging

The “energy of light” extends far beyond what the human eye can perceive. Infrared radiation is simply light with a longer wavelength and lower frequency than red light. Thermal cameras, often used in industrial drone inspections, use specialized sensors designed to detect long-wave infrared radiation, which we experience as radiant heat. Unlike the visible light in most scenes, which is reflected off surfaces, this radiation is emitted by the objects themselves according to their temperature. Long-wave infrared photons carry too little energy to free electrons in silicon, so capturing this “invisible” energy requires different detectors, such as microbolometers, whose elements warm up as they absorb the radiation and change electrical resistance in response.
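Why thermal cameras work in the long-wave infrared can be seen from Wien's displacement law, which gives the wavelength at which an ideal blackbody emits most strongly. The law and the blackbody idealization are brought in here for illustration and are not part of the discussion above. Real surfaces deviate from this according to their emissivity.

```python
WIEN_B_UM_K = 2897.771955  # Wien displacement constant, in micrometre-kelvins


def peak_emission_um(temperature_k: float) -> float:
    """Wavelength of peak blackbody emission, in micrometres."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive (kelvin)")
    return WIEN_B_UM_K / temperature_k


# An object near room temperature versus the surface of the Sun.
print(f"300 K object: {peak_emission_um(300.0):.2f} um")
print(f"5772 K Sun:   {peak_emission_um(5772.0):.2f} um")
```

A room-temperature object peaks near 10 micrometres, far outside the visible band of roughly 0.4 to 0.7 micrometres, while the Sun peaks near 0.5 micrometres, in the middle of it.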

Maximizing Image Quality via Photon Management

The ultimate goal of any imaging professional is to maximize the “Signal-to-Noise Ratio” (SNR). In the context of light energy, the “signal” is the useful energy from the subject, while “noise” is the unwanted electronic interference or random fluctuations in photon arrival.

Signal-to-Noise Ratio in Low Light

In environments where light is scarce (night-time or deep forests), the number of photons hitting the sensor is small. In these cases, the random nature of photon arrival, known as “shot noise,” becomes visible as grain. Shot noise cannot be removed, but its relative impact shrinks as more photons are collected, so modern sensors use “Back-Illuminated” (BSI) technology, which moves the sensor’s circuitry behind the light-collecting layer. More of the available light then reaches the photodiode instead of being blocked by the “wiring” of the chip. This efficiency is a major reason why modern compact drones can produce clean footage in conditions that would have been impractical a decade ago.
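Photon arrival follows Poisson statistics, so a pixel that collects N photons has shot noise of about the square root of N, and a shot-noise-limited SNR of the same value. The sketch below applies that rule. The photon count and the two collection efficiencies are hypothetical figures, not measurements of front- or back-illuminated sensors.

```python
import math


def shot_noise_snr(detected_photons: float) -> float:
    """Shot-noise-limited SNR: signal N divided by noise sqrt(N)."""
    return math.sqrt(detected_photons)


# Hypothetical dim scene: 1000 photons reach each pixel during the exposure.
incident_photons = 1000
for label, efficiency in [("less efficient sensor", 0.5), ("more efficient sensor", 0.8)]:
    snr = shot_noise_snr(incident_photons * efficiency)
    print(f"{label}: SNR about {snr:.1f}")
```

Because SNR grows only with the square root of the photon count, collecting four times as many photons is needed to double it, which is why every gain in light-collecting efficiency matters in low light.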

Future Innovations: Quantum Sensors and Beyond

The future of imaging lies in even more precise management of light energy. We are moving toward “Quantum Sensors” and “Single-Photon Avalanche Diodes” (SPADs), which are capable of detecting individual photons. These technologies promise to reduce electronic read noise to negligible levels and allow for imaging in near-total darkness, although shot noise remains a fundamental limit. Furthermore, global shutter technology is changing how energy is read off a sensor: every pixel records light over the same interval, eliminating the “jello effect” that rolling-shutter sensors produce in fast-moving aerial cinematography.

By viewing light not just as a visual phenomenon but as a quantifiable form of energy, we gain a deeper appreciation for the technology inside our cameras. From the physics of the photon to the chemistry of the silicon sensor and the mathematics of the image processor, every digital image is a testament to our ability to capture, measure, and manipulate the energy of light. For the drone pilot and the filmmaker, this knowledge is power—the power to push the limits of what can be captured from the sky.